Why Survey Length Is Sometimes Fake

You’re three minutes into a survey. They asked your age, your income, your zip code, whether you own or rent, how many people live in your household, and if you’ve purchased toothpaste in the last six months.

The little progress bar says you’re 15% done.

You do the math in your head. Three minutes for 15%. That means this whole thing is going to take 20 minutes. And that 20 minutes is a lie.

I’ve been in the survey game long enough to know how this works. I’ve built surveys, I’ve tested surveys, and I’ve watched people click through them while I sat on the other side of a one-way mirror and took notes on where they got bored and started clicking randomly just to make it stop.

And I’m telling you right now, the estimated completion time on surveys is sometimes fake. Here’s why.

The Math Problem

Most surveys tell you they’ll take “about 5 minutes.” But look at what they’re actually asking.

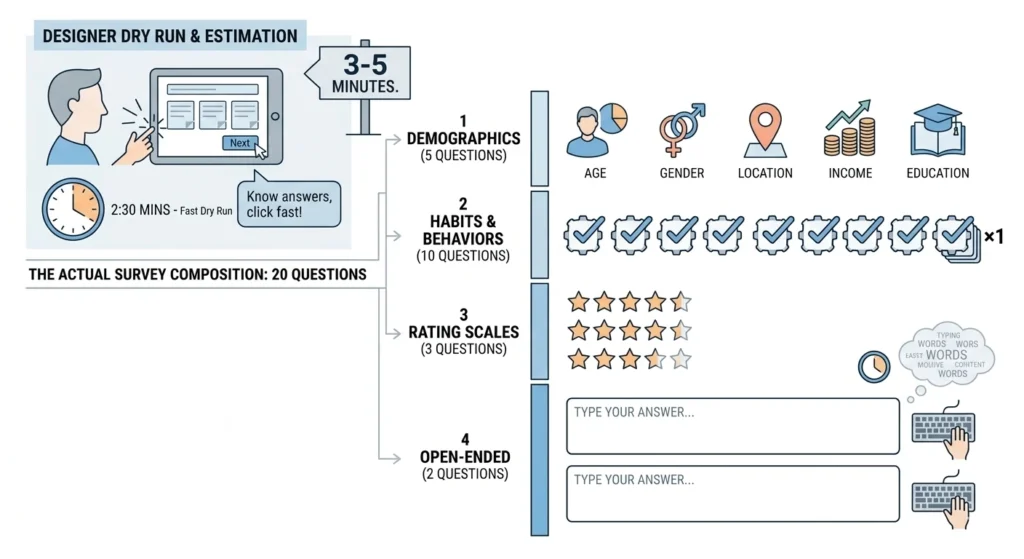

Let’s say a survey has 20 questions, including:

- 5 demographic questions (age, gender, location, income, education)

- 10 multiple choice questions about your habits

- 3 rating scale questions (on a scale of 1-5…)

- 2 open-ended questions where you have to type something

Now, the person building the survey does what’s called a “dry run.” They click through it themselves in 2 minutes and 30 seconds. They know what they’re asking, they know what they want, and they click fast.

So, they set the estimate at 3 or 5 minutes.

But here’s what actually happens when a real person takes it:

| Action | Time |
|---|---|
| Read the question | 5-10 seconds |
| Consider the options | 3-5 seconds |
| Click an answer | 1 second |
| Move to next page | 2-3 seconds load time |
| Repeat 20 times | …it adds up |

A 20-question survey that takes 2.5 minutes for the person who wrote it takes 6-8 minutes for a normal human being who’s never seen these questions before and has to actually think about them.
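As a rough check on the table above, here is a minimal Python sketch that adds up the per-question steps and multiplies by 20 questions. The step ranges come from the table; the names `STEPS` and `survey_time_range` are made up for this illustration.

```python
# Per-question time ranges in seconds, taken from the table above.
STEPS = {
    "read": (5, 10),
    "consider": (3, 5),
    "click": (1, 1),
    "page_load": (2, 3),
}

def survey_time_range(num_questions, steps=STEPS):
    """Return (low, high) total minutes for a survey of num_questions."""
    low = sum(lo for lo, _ in steps.values()) * num_questions
    high = sum(hi for _, hi in steps.values()) * num_questions
    return low / 60, high / 60

low, high = survey_time_range(20)
print(f"{low:.1f} to {high:.1f} minutes")  # 3.7 to 6.3 minutes
```

That is already well past the 2.5-minute dry run, and it counts no typing time for the two open-ended questions, which is presumably part of what pushes a real respondent toward 6-8 minutes.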

The International Tobacco Control Policy Evaluation Project, which runs massive international surveys, found that their early estimation methods required 35-70 hours of staff time and still produced unreliable numbers.

Even when they test these things, the estimates are off. One study tested six different formulas for estimating survey length against a real 133-question survey. The estimates ranged from 7.6 minutes to 39.6 minutes. The actual completion time? 14 minutes.

So right away, the estimate is off by a factor of two or three.
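To see where “a factor of two or three” comes from, here is a small Python sketch using only the three figures quoted above. The helper `error_factor` is a hypothetical name, not something from the study.

```python
# Figures quoted above, all in minutes: the lowest and highest formula
# estimates, and the measured completion time.
lowest_estimate = 7.6
highest_estimate = 39.6
actual = 14.0

def error_factor(estimate, actual):
    """How many times too small or too large an estimate is (always >= 1)."""
    return max(estimate, actual) / min(estimate, actual)

print(f"{error_factor(lowest_estimate, actual):.1f}x")   # 1.8x too short
print(f"{error_factor(highest_estimate, actual):.1f}x")  # 2.8x too long
```

So the formulas missed in both directions: the lowest came in at a bit over half the real time, the highest at nearly three times it.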

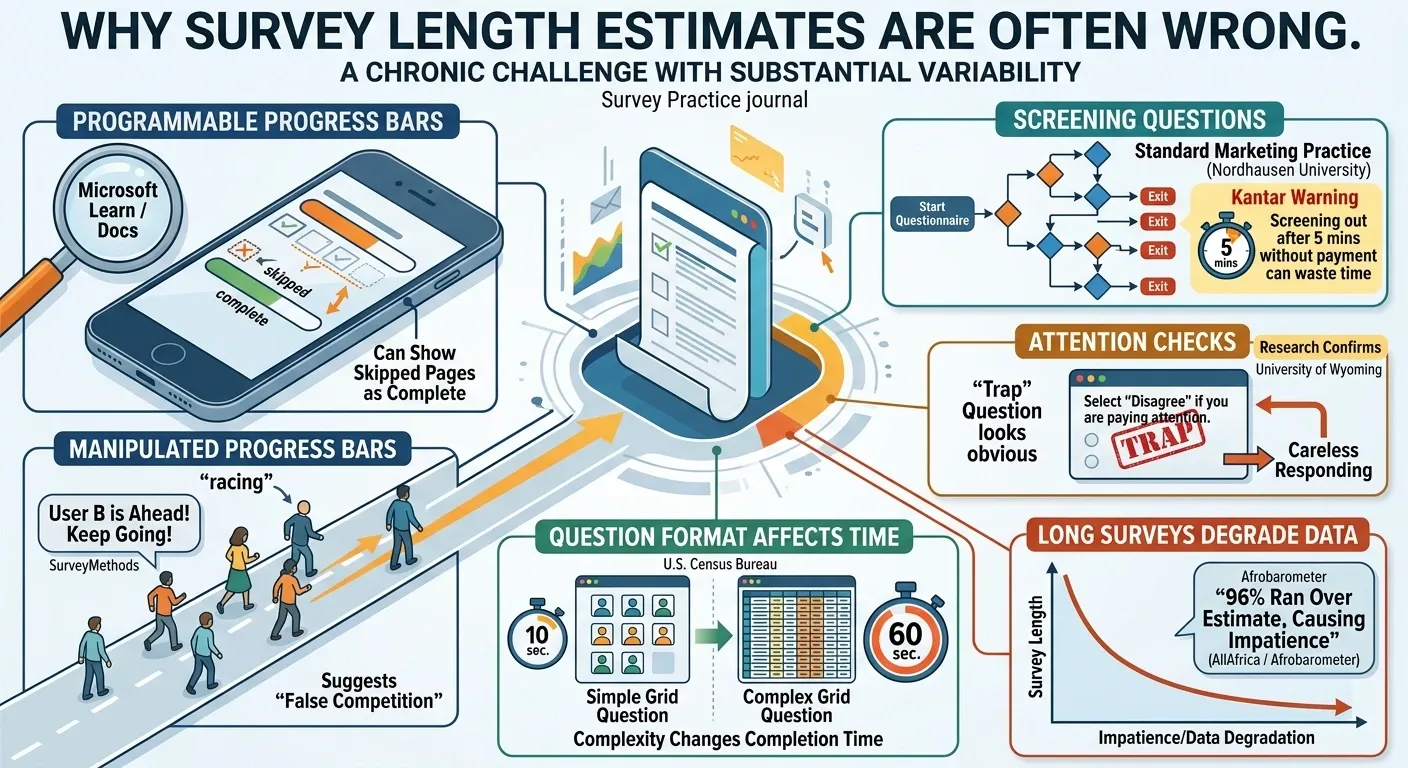

The Progress Bar Is Lying to You

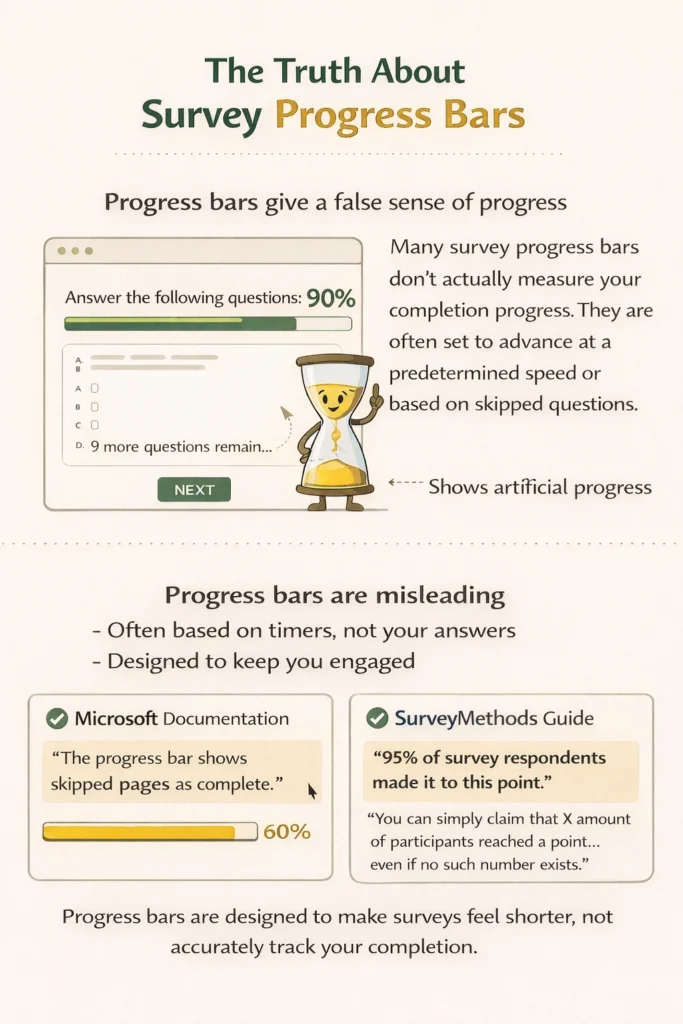

That progress bar at the bottom? The one that moves smoothly from 0 to 100%?

It’s not actually measuring your progress.

Sometimes it’s just a timer set to move at a certain speed regardless of how many questions you have left. You’ve been in those surveys where the bar hits 90% and you think you’re almost done, and then suddenly there are 12 questions on the page. The bar just… sits there at 90% for another five minutes.

Microsoft’s own documentation for Dynamics 365 Customer Voice, their survey platform, explains exactly how progress bars work: “The progress bar takes into account all pages in the survey. If pages have been skipped due to a branching rule, the progress bar shows the skipped pages as complete.”

If you skip pages because of branching logic (meaning the survey automatically routes you past questions that don’t apply), the progress bar counts those skipped pages as complete. So you jump from 20% to 40% without answering anything.
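Here is a minimal Python sketch of that page-counting behavior. It is not Microsoft’s code, just an illustration of the rule in the quote; the function name and the 10-page survey are assumptions.

```python
def page_progress(total_pages, answered_pages, skipped_pages):
    """Percent complete when skipped pages are counted as complete."""
    done = answered_pages + skipped_pages
    return round(100 * done / total_pages)

# Hypothetical 10-page survey: you have answered 2 pages.
print(page_progress(10, answered_pages=2, skipped_pages=0))  # 20
# A branching rule then routes you past pages 3 and 4.
print(page_progress(10, answered_pages=2, skipped_pages=2))  # 40
```

The bar doubled, and you answered nothing in between.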

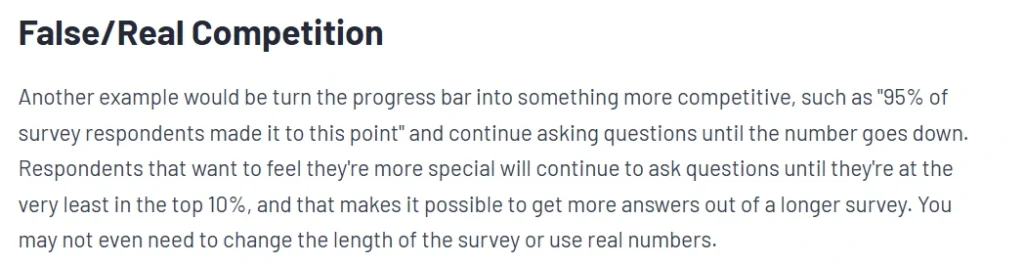

Some survey platforms take it even further. SurveyMethods, in their guide to “gamification,” suggests turning the progress bar into “something more competitive” by telling respondents that “95% of survey respondents made it to this point” as they keep going. They openly admit: “You can simply claim that X amount of participants reached a point… even if no such number exists.”

Other times, the progress is weighted by question type. Open-ended questions where you have to type count for less progress than multiple choice. So, you fly through 10 multiple choice questions, and the bar jumps to 60%. Then you hit one open-ended question, spend two minutes typing a thoughtful answer, and the bar moves to 62%.
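A weighted bar like that can be sketched in a few lines of Python. The weights and the 18-question survey below are invented to reproduce the 60% and 62% figures; no real platform’s weighting is being quoted here.

```python
# Hypothetical weights: an open-ended question moves the bar a third as
# far as a multiple choice one. These numbers are made up for illustration.
WEIGHTS = {"multiple_choice": 3, "open_ended": 1}

def weighted_progress(questions, answered):
    """Percent shown after the first `answered` questions are done."""
    total = sum(WEIGHTS[q] for q in questions)
    done = sum(WEIGHTS[q] for q in questions[:answered])
    return round(100 * done / total)

# Hypothetical survey: 10 multiple choice, 1 open-ended,
# then 6 more multiple choice and 1 more open-ended.
survey = (
    ["multiple_choice"] * 10
    + ["open_ended"]
    + ["multiple_choice"] * 6
    + ["open_ended"]
)

print(weighted_progress(survey, 10))  # 60
print(weighted_progress(survey, 11))  # 62
```

Under these assumed weights, two minutes of typing buys you two percentage points.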

The progress bar isn’t showing you how close you are to done. It’s showing you how close the survey company wants you to think you are, so you don’t close the tab.


The Screener Tax

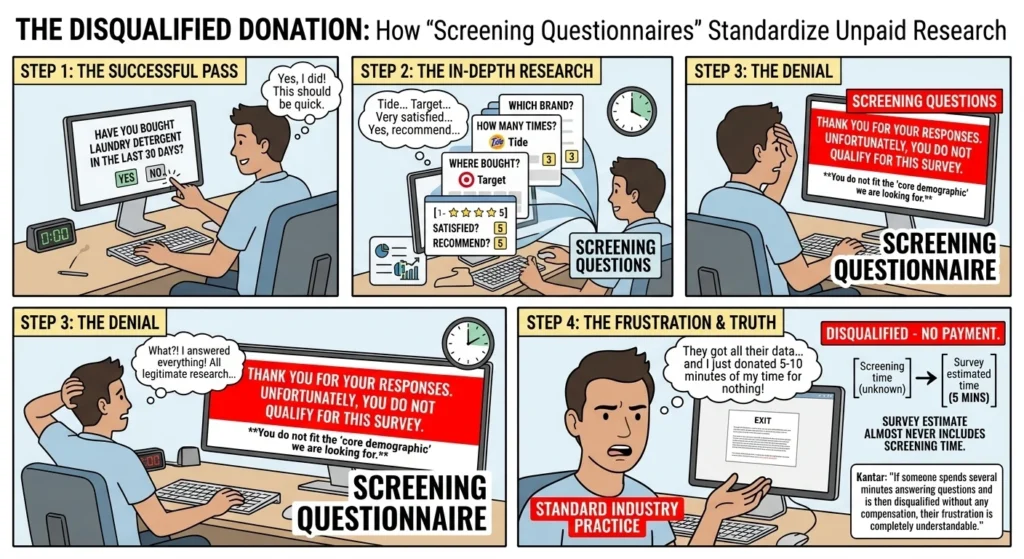

You start a survey. They ask if you’ve bought laundry detergent in the last 30 days. You say yes. Great. They ask what brand. You say Tide. They ask how many times, they ask where you bought it, they ask if you’re satisfied, they ask if you’d recommend it.

Five minutes in.

Then they hit you with: “Thank you for your responses. Unfortunately, you do not qualify for this survey.” What?

You just took five minutes of screening questions. They got all their data, learned about your laundry habits, and then kicked you out without paying because you don’t fit the “core demographic” they’re looking for.

This is called a “screening questionnaire” in the industry, and it’s standard practice.

Kantar points out that screening needs to be handled thoughtfully because if someone spends several minutes answering questions and is then disqualified without any compensation, their frustration is completely understandable.

The screening questions are legitimate research. But the survey length estimate? It almost never includes the time you spend getting screened. And if you do get screened out, you just donated 5-10 minutes of your time for nothing.

The Double Dip

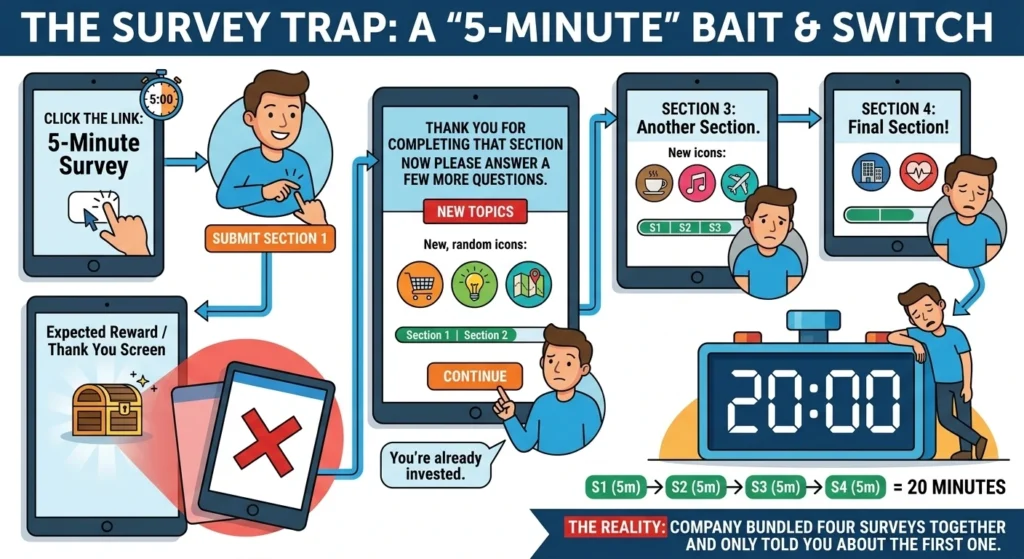

Sometimes surveys aren’t one survey at all. You click a link that says, “5-minute survey.”

You finish the questions and hit submit. You expect your reward or your “thank you” screen.

Instead, you get: “Thank you for completing that section. Now please answer a few more questions.”

And it’s a whole new set of questions, with different topics and a different format. But you’re already invested, so you keep going.

Then another section.

And another.

What started as a “5-minute survey” just turned into 20 minutes because the company bundled four surveys together and only told you about the first one.

The Data They Don’t Count

Here’s what survey length estimates don’t include:

- The 30 seconds it takes the page to load

- The 15 seconds you spend squinting at a poorly formatted question

- The minute you spend trying to remember exactly when you last bought peanut butter

- The 45 seconds you spend deciding whether “somewhat agree” or “agree” is more accurate

- The time it takes to enter your birth year by scrolling through a dropdown menu from 1920 to 2024

- The 20 seconds of “just kidding, that answer was invalid, please select again”

- The loading screen that freezes and makes you refresh and start over

All of that is real time. None of it is in the estimate.

The U.S. Census Bureau, which thinks about survey design for a living, ran experiments on question format and completion time. They found that the amount of time needed to answer a survey question depends on how complex it is and the level of mental effort required from the respondent.

What Actual Testing Shows

Here’s what the data looks like when you compare estimated vs actual.

| Question Type | Estimated Time | Actual Time |
|---|---|---|
| Multiple choice (familiar topic) | 5 seconds | 8-12 seconds |
| Multiple choice (unfamiliar topic) | 5 seconds | 15-20 seconds |
| Rating scale (1-5) | 3 seconds | 6-10 seconds |
| Open-ended (short answer) | 15 seconds | 30-60 seconds |
| Open-ended (paragraph) | 30 seconds | 2-5 minutes |
| Demographic questions | 2 seconds each | 5-10 seconds each |
| Matrix questions (grid of ratings) | 10 seconds | 30-45 seconds |

A survey with 10 multiple choice questions, 5 rating scales, and 2 open-ended questions gets estimated at 5 minutes, but real people take 8-12 minutes.
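If you want to run the table’s per-question figures yourself, here is a rough Python sketch for that same question mix. Which rows apply is my assumption (familiar-topic multiple choice, paragraph-length open-ended answers), and the names `TIMES`, `MIX`, and `totals_in_minutes` are hypothetical.

```python
# (estimated, actual_low, actual_high) seconds per question, from the table.
TIMES = {
    "multiple_choice_familiar": (5, 8, 12),
    "rating_scale": (3, 6, 10),
    "open_ended_paragraph": (30, 120, 300),
}

# Question mix from the example; which table rows apply is an assumption.
MIX = {
    "multiple_choice_familiar": 10,
    "rating_scale": 5,
    "open_ended_paragraph": 2,
}

def totals_in_minutes(mix, times=TIMES):
    """Return (estimated, actual_low, actual_high) totals in minutes."""
    sums = [0, 0, 0]
    for kind, count in mix.items():
        for i, seconds in enumerate(times[kind]):
            sums[i] += seconds * count
    return tuple(s / 60 for s in sums)

est, low, high = totals_in_minutes(MIX)
print(f"estimated {est:.1f} min, actual {low:.1f} to {high:.1f} min")
# estimated 2.1 min, actual 5.8 to 12.8 min
```

The rows only cover answering time, not intro screens or page loads, so treat the absolute totals as a floor. The gap between the two columns is the part to look at: the actual range runs about three to six times the estimated figure.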

Why They Do It

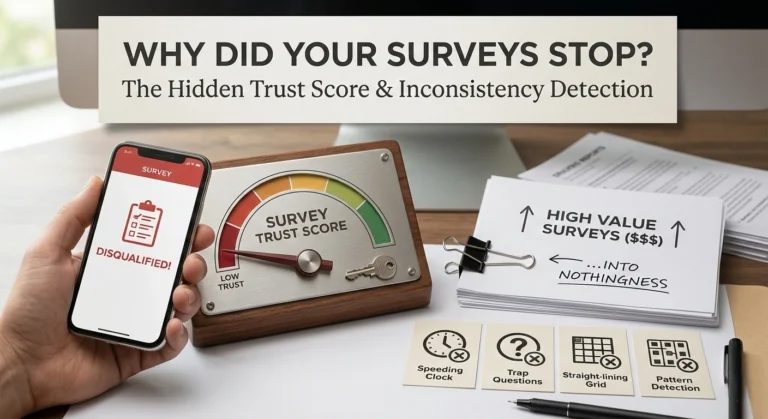

Sometimes survey companies lie about length to increase click-through rate.

If a survey link said, “This will take approximately 18 minutes,” how many people would click it? Not many. The completion rate would tank.

But if it says, “5 minutes” and then takes 18, well, you’re already invested. You’re more likely to finish because you’ve already put in the time. Sunk cost fallacy, applied to market research.

Research confirms that “respondents are more likely to complete shorter surveys.” So the incentive is clear: underpromise on time, overdeliver on questions.

Sometimes they’re not estimating. They’re optimizing for starts.

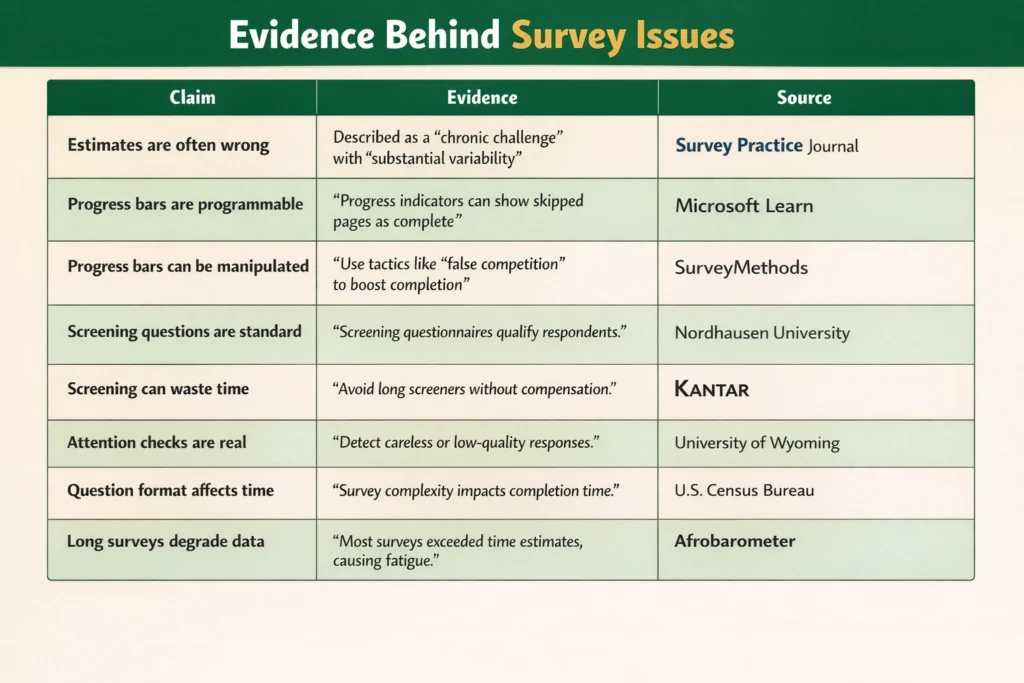


The One Exception

Sometimes survey estimates are actually accurate.

Usually these are:

- Surveys you take for work or internal purposes.

- High-end market research panels that pay decent money.

- Surveys that have been rigorously pre-tested with real users.

- Surveys that use “adaptive questioning” where the length actually depends on your answers.

But the random “tell us about your shopping habits” popup on a website? The one that says 3 minutes but has 40 questions? That estimate is nonsense.

How to Spot the Lie

Here’s what I look for.

If the estimated time is a round number, be suspicious. “5 minutes,” “10 minutes,” “15 minutes.” Those are guesses. Real testing produces weird numbers like “7-9 minutes” or “12-15 minutes.”

If the progress bar moves weirdly, they’re manipulating you. Bouncing from 20% to 50% to 55% to 90% to 92%? That’s not real progress, that’s psychology.

If the first five questions are demographics, they might be screening you for free. The real survey starts after they figure out if you qualify. The estimate rarely includes the screener time.

If there are open-ended questions, double the estimate. Every open-ended question adds at least a minute that the estimator ignores.

If it’s a “matrix” of questions (the grid where you rate 10 things on the same scale), add 50% to the estimate. Those take forever to read and process.
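The last two rules of thumb are easy to turn into a quick calculator. This is only a sketch of my own heuristics in Python: the function name is made up, and stacking the two adjustments by multiplying them is an assumption, not something I have measured.

```python
def adjusted_estimate(advertised_minutes, has_open_ended=False, has_matrix=False):
    """Apply the rules of thumb above to an advertised survey length."""
    minutes = advertised_minutes
    if has_open_ended:
        minutes *= 2      # "double the estimate"
    if has_matrix:
        minutes *= 1.5    # "add 50% to the estimate"
    return minutes

print(adjusted_estimate(5))                                        # 5
print(adjusted_estimate(5, has_open_ended=True))                   # 10
print(adjusted_estimate(5, has_open_ended=True, has_matrix=True))  # 15.0
```

So a “5-minute” survey with both open-ended questions and a matrix grid is one I would budget 15 minutes for.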

The Bottom Line

Survey length estimates are sometimes marketing, not math.

And the marketing ones are designed to get you to start, not to inform you of what’s coming. The company running the survey knows the real time. They have the data, and they’ve tested it. They just choose not to share it because honesty would hurt their completion rates.

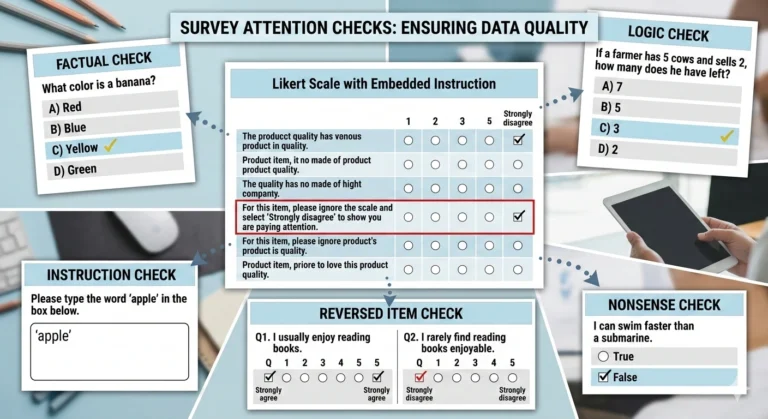

So next time you see “This survey will take about 5 minutes,” mentally double it. Triple it if there are open-ended questions. Add a buffer for loading times and attention checks.

Or just assume it’s going to take however long it takes and decide upfront whether that’s worth your time.

Because the number they gave you? It’s probably fake.